MWC 2026: Qualcomm Expands Snapdragon Elite to Wearables for Contextual AI

Summary

At MWC, Qualcomm applied the Snapdragon Elite brand to a new SoC for wearables expected to power pendants, pins, watches, and other form factors for AI-driven experiences. It’s an ambitious concept that will require changes in software, use cases, and social norms. The key is that humans need to be comfortable wearing them. This isn't (just) about weight, battery life, or explaining why having a device that provides context to AI is worth paying for and wearing. It's about getting people to trust that these devices will respect privacy and social norms so that they will give the devices a chance to overcome all the usual (and solvable) device tech issues. Suggestions on how to do this follow.

Context

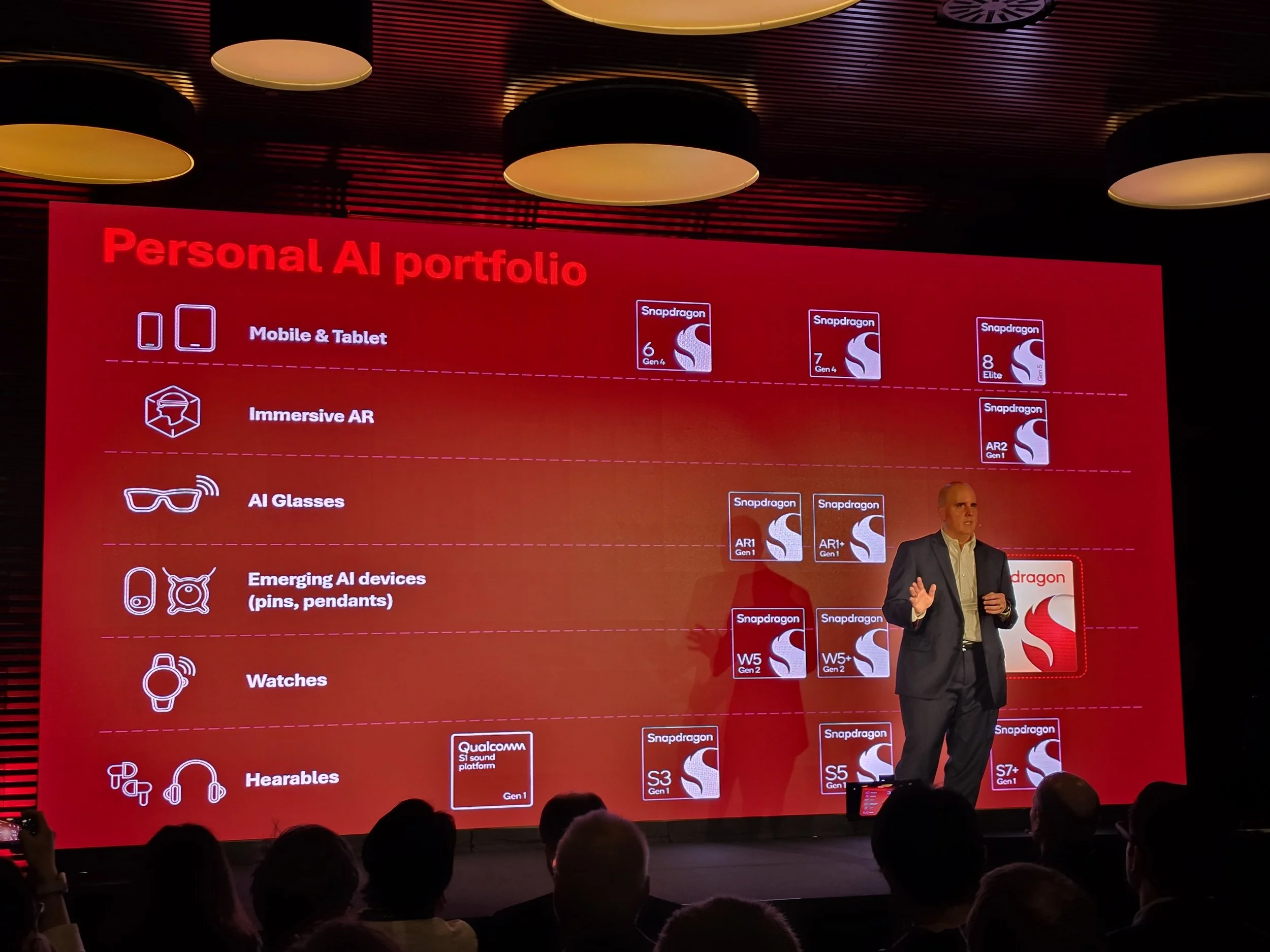

Qualcomm has been an early investor in wearables, XR, and on-device AI (really early). It has notable successes with Meta in XR and powers much of the WearOS smartwatch ecosystem. While Apple dominates the smartwatch market overall, Qualcomm has steadily made inroads everywhere else, even with Google, which designs its own silicon for its Pixel phones but is using Qualcomm’s Snapdragon W5 Gen 2 for the Pixel Watch 4. Smartwatches are a well-established category, and Qualcomm has been pushing into smart headwear with two types of silicon: Snapdragon AR2 for lightweight glasses, and Snapdragon XR2 for VR headsets. However, with the advent of personalized AI as a primary use case for new wearables, where on-device AI may actually be the user interface and hybrid AI provides the apps, there are reasons to expand the line further.

At MWC 2026, Qualcomm launched the first “Elite”-branded chipset for connected wearables. The Snapdragon Wear Elite is built on a 3nm process, balances strong performance with long battery life, and is intended for an expanding portfolio of pins, pendants, and other wearables, in addition to smartwatches.

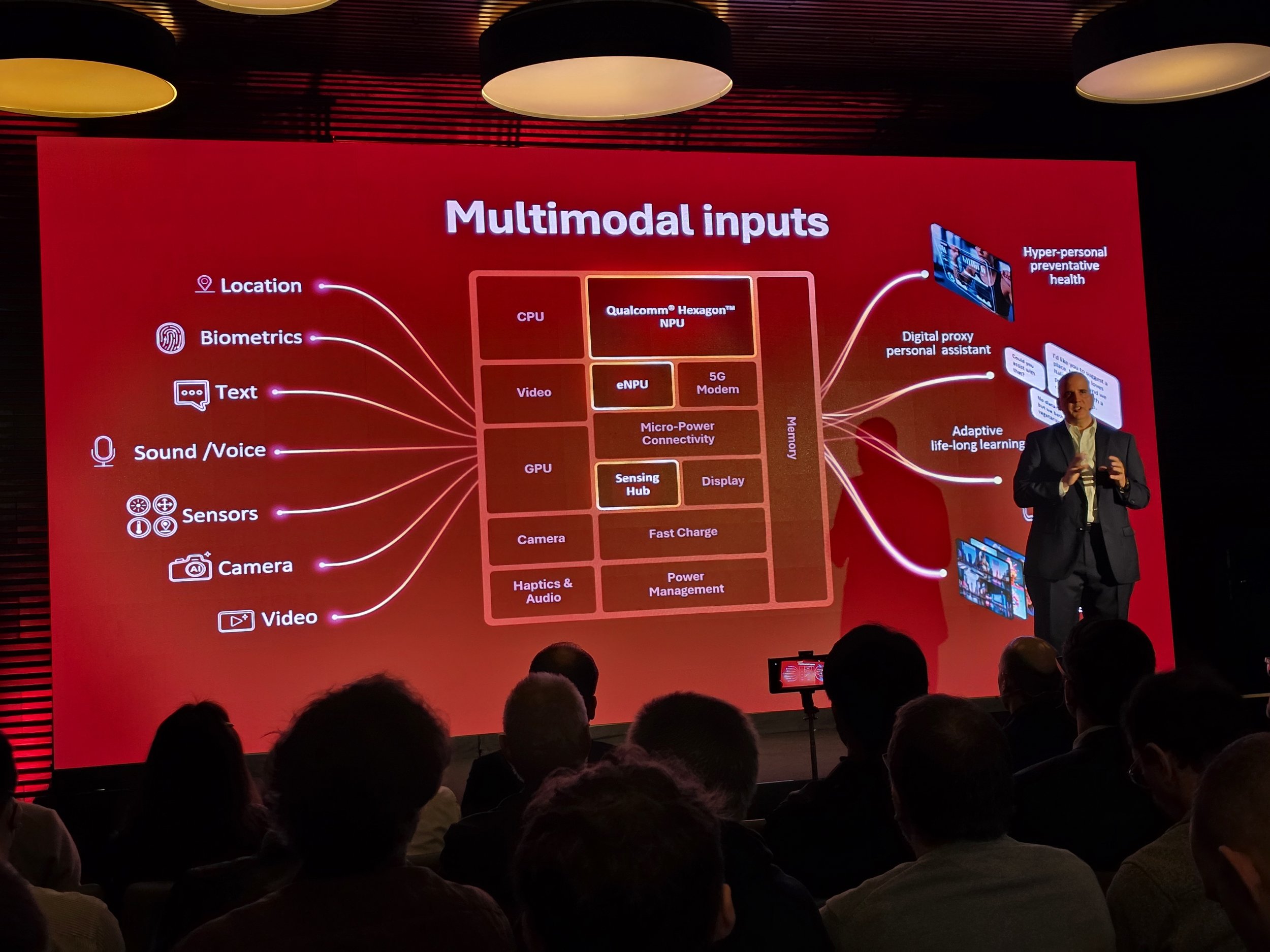

The challenge isn’t to build enough compute or NPU or imaging; Qualcomm has that already in its chips for phones and laptops. The trick is to get just the right balance between capabilities and battery life on tiny wearables, and then to connect and integrate them with everything else. The Snapdragon Wear Elite is based on the latest 3nm process to provide enough compute to run small local AI models for privacy and low latency while still just sipping power. It supposedly “lasts for days,” not hours. Qualcomm also believes that these devices need to connect not only to other devices but to the network and cloud, so it is embedding essentially every major connectivity standard that makes sense to stuff into a tiny device. That includes 5G RedCap for cellular, micro-power Wi-Fi, Bluetooth, UWB, and satellite.

That still sounds a lot like other chips in Qualcomm’s product line, so I asked the company why a device maker would choose the Snapdragon Wear Elite over other low-power designs for smart glasses and headsets. The answer is that it depends on what specific features are being prioritized in the wearable. What works in mobile doesn’t work in wearables: the power curve needs to be 20x smaller. The Snapdragon Wear Elite has more general and AI compute capabilities and a more modern process architecture than Qualcomm’s Snapdragon AR. But the Snapdragon AR has computing blocks tuned for perception, like recognizing hand gestures, and support for the highest-end cameras for use on glasses form factors. The Snapdragon XR2+ is still going to be the best choice for most headsets and compute pucks for tethered glasses because the silicon supports more extensive interface and tracking capabilities. That still leaves an opening for watches and camera-enabled wearables like pins, lanyards, headphones, and hats/helmets.

Qualcomm knows that getting hybrid AI to work across devices will be a challenge, so the company is working to build the full stack, on its own where it has to, and with partners where it can. It helps that Qualcomm already provides the foundations for most Android phones and tablets, and is making inroads in Windows PCs with Snapdragon X.

At Qualcomm’s press conference, Google, Lenovo/Motorola, and Samsung all joined the company on stage to voice support for Snapdragon Wear Elite.

Google is supplying WearOS, and all but promised that future Pixel Watches will use the chip.

Samsung did actually promise that the next Galaxy Watch will use Snapdragon Wear Elite. That’s a big design win: up until now, Samsung has used Exynos processors for the Galaxy Watch line. However, Samsung did not hint at any of the other potential wearable form factors it has teased at its own events and Google I/O.

During the first European-centric segment of Qualcomm’s MWC keynote, Lenovo showed off new laptops and tablets that run on Qualcomm’s Snapdragon and Snapdragon X platforms. During the Snapdragon Wear Elite segment, Lenovo’s Motorola division talked about its Project Maxwell AI pendant.

That was it for the partner spots at the press conference, but I'd be shocked if Meta, Xiaomi, and others aren't also planning products around Snapdragon Wear Elite.

The Viability of Wearable AI as a Category Depends on Trust

I’m bullish about Snapdragon Wear Elite and about personal wearable AI as a category, but the viability of that category depends on trust: you can’t simply handwave away the privacy and cultural issues that need to be addressed.

I have tested many wearables that act as personal surveillance devices, from camera-enabled AI glasses to privacy-nightmare pendants. Meta’s smart glasses don’t record continuously – they don’t have the battery or AI capabilities to do so in this generation, but they almost certainly will in the future. Looki, a life-logging pendant, is particularly polarizing. In the right circumstances, like a family trip to Disneyland, the video montages and daily cartoons it produces are delightful! However, in almost any other situation, you’re essentially wearing a dystopian police bodycam that records everyone around you while commenting inaccurately on what it thinks it saw you eating. I wore it at CES, and, to ensure it was off during sensitive meetings, I turned it off – I think – and then to be sure I stashed it two layers deep inside my bag.

As we try to integrate AI into our lives and business processes, there are huge benefits to giving AI context on where we are, and what we are doing. Knowing if you’re on a train, working out, staring at a PC, in a meeting room, movie theater, or a park all can help AI provide appropriate information and suggestions – or know not to interrupt at all. However, it isn’t as simple as sticking a camera on your glasses, necklace, or Star Trek comm badge, and asking people to keep another thing charged and unobstructed by other articles of clothing. (Though those are still challenges.)

For this category to grow, we can’t just wait for privacy norms to change; we have to make the devices behave better.

There are times and places where cameras and microphones are never going to be acceptable – like a bathroom. There are places where AI surveillance could be acceptable, but only if trust is earned and restrictions are built in, and companies in the AI / device ecosystem need to work on best practices so that their devices won’t be banned from workplaces, courthouses, and meeting rooms before the category even gets off the ground. Practical examples include a physical privacy shutter that the user can close any time they – or the people around them – feel uncomfortable. There should be a mute button that blocks the mic (Sonos does this on its smart speakers); it could be tied to the camera shutter. Just like drones have no-fly zones programmed into their GPS systems, there should be locations where responsible AI devices automatically stop recording – again with a physical indicator that the device is, indeed, inactive – such as bathrooms, locker rooms, and medical waiting rooms. If the goal is an actual recording, then the footage needs to be saved (for example, for AI note-taking apps, having the original audio or video may be crucial for archival purposes or ensuring accuracy later). But if the goal of having a camera is just to provide context for AI, then the footage not only should not be saved, it probably should not be human-accessible at all. After it is processed – ideally on-device – the original audio and video information should be automatically deleted. This has the additional benefit of not having to pay to store all that video and audio information, while still allowing the AI to know that you’re in a crowded supermarket, or even identify where you left your keys.
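To make those suggestions concrete, here is a minimal sketch of how such a capture policy could be expressed in device software. Everything in it is an illustrative assumption rather than anything Qualcomm or a device maker has announced: the names `DeviceState`, `Purpose`, `CaptureDecision`, `decide_capture`, and `NO_CAPTURE_ZONES` are hypothetical, and how a device would actually know it is in a bathroom is left out entirely. The sketch also keeps the shutter and the mic mute as independent controls, although they could be tied together.

```python
from dataclasses import dataclass
from enum import Enum


class Purpose(Enum):
    CONTEXT_ONLY = "context_only"  # footage only gives the AI context
    ARCHIVAL = "archival"          # e.g. AI note taking, where originals matter


# Hypothetical place categories where sensors should never be active.
NO_CAPTURE_ZONES = frozenset({"bathroom", "locker_room", "medical_waiting_room"})


@dataclass(frozen=True)
class DeviceState:
    shutter_closed: bool  # physical privacy shutter over the camera
    mic_muted: bool       # physical mute button
    place: str            # assumed to come from some location classifier


@dataclass(frozen=True)
class CaptureDecision:
    camera_on: bool
    mic_on: bool
    indicator_inactive: bool  # drive a visible "sensors are off" indicator
    retain_raw: bool          # keep original audio/video after processing


def decide_capture(state: DeviceState, purpose: Purpose) -> CaptureDecision:
    """Apply the no-capture zones and physical controls, then decide retention."""
    in_zone = state.place in NO_CAPTURE_ZONES
    camera_on = not state.shutter_closed and not in_zone
    mic_on = not state.mic_muted and not in_zone
    capturing = camera_on or mic_on
    return CaptureDecision(
        camera_on=camera_on,
        mic_on=mic_on,
        indicator_inactive=not capturing,
        # Raw footage survives only when recording is the actual goal;
        # context-only footage is deleted once it has been processed.
        retain_raw=capturing and purpose is Purpose.ARCHIVAL,
    )
```

The design choice worth noting is that the physical controls and the no-capture zones always win over the app's stated purpose: a note-taking app cannot turn the sensors back on in a locker room, and a context-only app never gets to keep the originals.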

For Techsponential clients, a report is a springboard to personalized discussions and strategic advice. To discuss the implications of this report on your business, product, or investment strategies, contact Techsponential at avi@techsponential.com.